$ crs_stat -t

Name Type Target State Host

------------------------------------------------------------

ora.DATA.dg ora....up.type ONLINE ONLINE ol5-...rac1

ora....ER.lsnr ora....er.type ONLINE ONLINE ol5-...rac1

ora....N1.lsnr ora....er.type ONLINE ONLINE ol5-...rac1

ora.asm ora.asm.type ONLINE ONLINE ol5-...rac1

ora.eons ora.eons.type ONLINE ONLINE ol5-...rac1

ora.gsd ora.gsd.type OFFLINE OFFLINE

ora....network ora....rk.type ONLINE ONLINE ol5-...rac1

ora.oc4j ora.oc4j.type OFFLINE OFFLINE

ora....SM1.asm application ONLINE ONLINE ol5-...rac1

ora....C1.lsnr application ONLINE ONLINE ol5-...rac1

ora....ac1.gsd application OFFLINE OFFLINE

ora....ac1.ons application ONLINE ONLINE ol5-...rac1

ora....ac1.vip ora....t1.type ONLINE ONLINE ol5-...rac1

ora....SM2.asm application ONLINE ONLINE ol5-...rac2

ora....C2.lsnr application ONLINE ONLINE ol5-...rac2

ora....ac2.gsd application OFFLINE OFFLINE

ora....ac2.ons application ONLINE ONLINE ol5-...rac2

ora....ac2.vip ora....t1.type ONLINE ONLINE ol5-...rac2

ora.ons ora.ons.type ONLINE ONLINE ol5-...rac1

ora.rac.db ora....se.type ONLINE ONLINE ol5-...rac2

ora....rts.svc ora....ce.type ONLINE ONLINE ol5-...rac1

ora.scan1.vip ora....ip.type ONLINE ONLINE ol5-...rac1
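A listing like the one above gets long on a multi-node cluster, so it can help to filter it down to the resources that are not ONLINE. The following is a minimal Python sketch, not an Oracle-provided tool. The function name `offline_resources` is made up for illustration, and it assumes the whitespace-separated `crs_stat -t` layout shown above (Name, Type, Target, State, and an optional Host column).

```python
def offline_resources(crs_stat_output):
    """Return (name, state) pairs for resources whose State is not ONLINE."""
    result = []
    for line in crs_stat_output.splitlines():
        parts = line.split()
        # Resource rows start with "ora." and carry at least
        # Name, Type, Target and State; the Host column may be missing.
        if len(parts) >= 4 and parts[0].startswith("ora."):
            name, state = parts[0], parts[3]
            if state != "ONLINE":
                result.append((name, state))
    return result


# A few rows taken from the listing above (header row included on purpose).
sample = """\
Name Type Target State Host
ora.asm ora.asm.type ONLINE ONLINE ol5-...rac1
ora.gsd ora.gsd.type OFFLINE OFFLINE
ora.oc4j ora.oc4j.type OFFLINE OFFLINE
"""

for name, state in offline_resources(sample):
    print(name, state)
```

In practice the text would come from capturing the command output on a cluster node; the hard-coded sample only keeps the sketch self-contained.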

Month: April 2013

Basic Clusterware Administration Commands

In the previous post we talked about Oracle Clusterware basics and definitions. Now let’s look at the basic administration commands.

- olsnodes

The OLSNODES command provides the list of nodes and other information for all nodes participating in the cluster. The syntax for the OLSNODES command is:

Without any command parameters, the OLSNODES command returns a listing of the nodes in the cluster:

# olsnodes

node1

node2

node3

node4

Use the OLSNODES command with the following options to obtain additional cluster-related information:

| Option | Description |
|---|---|
| -g | Logs cluster verification information with more details. |
| -i | Lists all nodes participating in the cluster and includes the Virtual Internet Protocol (VIP) address assigned to each node. |
| -l | Displays the local node name. |
| -n | Lists all nodes participating in the cluster and includes the assigned node numbers. |
| -p | Lists all nodes participating in the cluster and includes the private interconnect assigned to each node. |
| -v | Logs cluster verification information in verbose mode. |
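When the node list is needed inside a script, the output of the `-n` option is easy to parse. Here is a small Python sketch under a stated assumption: each output line holds the node name followed by its node number, separated by whitespace. The helper name `parse_olsnodes_n` and the node numbers in the sample are hypothetical.

```python
from typing import Dict


def parse_olsnodes_n(output: str) -> Dict[str, int]:
    """Map node name -> node number from `olsnodes -n` style text."""
    nodes = {}
    for line in output.splitlines():
        parts = line.split()
        # Keep only lines that look like "<node_name> <node_number>".
        if len(parts) >= 2 and parts[1].isdigit():
            nodes[parts[0]] = int(parts[1])
    return nodes


# Hypothetical sample in the assumed "<name> <number>" layout.
sample = """\
node1   1
node2   2
node3   3
node4   4
"""

print(parse_olsnodes_n(sample))
```

Lines that do not match the expected shape are skipped rather than raising an error, which keeps the helper tolerant of blank lines or extra messages.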

- ocrcheck

The OCRCHECK utility verifies the integrity of the OCR. It displays the version of the OCR’s block format, the total space available and the used space, the OCRID, and the OCR locations that you have configured. OCRCHECK performs a block-by-block checksum operation for all of the blocks in all of the OCRs that you have configured. It also returns an individual status for each file as well as a result for the overall OCR integrity check. The following is a sample of the OCRCHECK output:

# ./ocrcheck

Status of Oracle Cluster Registry is as follows :

Version : 3

Total space (kbytes) : 262120

Used space (kbytes) : 2528

Available space (kbytes) : 259592

ID : 1197072699

Device/File Name : +DATA

Device/File integrity check succeeded

Device/File not configured

Device/File not configured

Device/File not configured

Device/File not configured

Cluster registry integrity check succeeded

Logical corruption check succeeded

Note: Run this command as root so that the logical corruption check is performed. If you run it as a non-privileged user, that check is bypassed.
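To keep an eye on how full the OCR is, the space figures in the OCRCHECK output can be turned into a percentage. The sketch below is only an illustration in Python: the helper names `parse_ocrcheck` and `used_percent` are made up, and it assumes the "label : value" layout of the sample output above.

```python
def parse_ocrcheck(output):
    """Collect the "label : value" lines of ocrcheck-style text into a dict."""
    fields = {}
    for line in output.splitlines():
        key, sep, value = line.partition(":")
        # Skip lines without a colon or without a value after it.
        if sep and value.strip():
            fields[key.strip()] = value.strip()
    return fields


def used_percent(fields):
    """Used space as a percentage of total space."""
    total = int(fields["Total space (kbytes)"])
    used = int(fields["Used space (kbytes)"])
    return 100.0 * used / total


# Values copied from the sample output above.
sample = """\
Status of Oracle Cluster Registry is as follows :
Version : 3
Total space (kbytes) : 262120
Used space (kbytes) : 2528
Available space (kbytes) : 259592
ID : 1197072699
Device/File Name : +DATA
"""

fields = parse_ocrcheck(sample)
print("OCR used: %.2f%%" % used_percent(fields))
```

The same parsing works for the `-local` variant of the command, since the labels for the space figures are the same.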

- ocrcheck -local

In Oracle Clusterware 11g release 2 (11.2), each node in a cluster has a local registry for node-specific resources, called an Oracle Local Registry (OLR), that is installed and configured when Oracle Clusterware installs OCR. Multiple processes on each node have simultaneous read and write access to the OLR particular to the node on which they reside, regardless of whether Oracle Clusterware is running or fully functional.

By default, OLR is located at Grid_home/cdata/host_name.olr on each node. Manage OLR using the OCRCHECK, OCRDUMP, and OCRCONFIG utilities as root with the -local option.

# ./ocrcheck -local

Status of Oracle Local Registry is as follows :

Version : 3

Total space (kbytes) : 262120

Used space (kbytes) : 2184

Available space (kbytes) : 259936

ID : 1563540278

Device/File Name : /u01/app/11.2.0/grid/cdata/ol5-112-rac1.olr

Device/File integrity check succeeded

Local registry integrity check succeeded

Logical corruption check succeeded
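The default OLR location described above follows a simple pattern, which can be sketched in Python as follows. This is a hedged illustration only: the function name `default_olr_path` is hypothetical, and it assumes that the host name used in the file name is the node name as shown in the sample output.

```python
import posixpath
import socket


def default_olr_path(grid_home, host_name=None):
    """Build Grid_home/cdata/host_name.olr, the default OLR location."""
    # Assumption: fall back to the local host name when none is given.
    host = host_name or socket.gethostname()
    return posixpath.join(grid_home, "cdata", host + ".olr")


# Grid home and host name taken from the sample output above.
print(default_olr_path("/u01/app/11.2.0/grid", "ol5-112-rac1"))
```

With the values from the sample output, the result matches the Device/File Name line reported by `ocrcheck -local`.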

- crsctl

CRSCTL is an interface between you and Oracle Clusterware, parsing and calling Oracle Clusterware APIs for Oracle Clusterware objects.

Oracle Clusterware 11g release 2 (11.2) introduces cluster-aware commands with which you can perform check, start, and stop operations on the cluster. You can run these commands from any node in the cluster on another node in the cluster, or on all nodes in the cluster, depending on the operation.

The CRSCTL documentation is quite extensive and can be found at the link below:

(http://docs.oracle.com/cd/E11882_01/rac.112/e16794/crsref.htm)

CRSCTL can also be used to check the state of the voting disks:

# ./crsctl query css votedisk

## STATE File Universal Id File Name Disk group

-- ----- ----------------- --------- ---------

1. ONLINE 911b058da40b4f96bf456abb771dddc8 (/dev/oracleasm/disks/DISK1) [DATA]

Located 1 voting disk(s).
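For monitoring, the voting disk rows can be picked out of this output with a regular expression. The Python sketch below is an illustration, not part of CRSCTL. The name `parse_votedisks` is made up, and the pattern assumes rows shaped like the one above: a number, the state, the File Universal Id, the file name in parentheses, and the disk group in square brackets.

```python
import re

# One voting disk row: "1. ONLINE <fuid> (<file name>) [<disk group>]"
ROW = re.compile(
    r"^\s*(\d+)\.\s+(\S+)\s+([0-9a-fA-F]+)\s+\((.+?)\)\s+\[(.*?)\]\s*$"
)


def parse_votedisks(output):
    """Return one dict per voting disk row found in the text."""
    disks = []
    for line in output.splitlines():
        match = ROW.match(line)
        if match:
            number, state, fuid, file_name, disk_group = match.groups()
            disks.append({
                "number": int(number),
                "state": state,
                "fuid": fuid,
                "file_name": file_name,
                "disk_group": disk_group,
            })
    return disks


# Row copied from the sample output above.
sample = """\
## STATE File Universal Id File Name Disk group
1. ONLINE 911b058da40b4f96bf456abb771dddc8 (/dev/oracleasm/disks/DISK1) [DATA]
Located 1 voting disk(s).
"""

for disk in parse_votedisks(sample):
    print(disk["number"], disk["state"], disk["file_name"], disk["disk_group"])
```

Header and summary lines do not match the pattern, so only the disk rows end up in the result.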

Thanks,

Alfred

Oracle Clusterware 11gR2 Basics

In this post I will talk about Oracle Clusterware basics. You really need to know the basics before we can start talking about Clusterware installation, configuration and administration. The information below is an extract from the Oracle Clusterware Administration and Deployment Guide 11g Release 2 (11.2) (http://docs.oracle.com/cd/E14072_01/rac.112/e10717.pdf).

What is Oracle Clusterware?

Oracle Clusterware enables servers to communicate with each other, so that they appear to function as a collective unit. This combination of servers is commonly known as a cluster. Although the servers are standalone servers, each server has additional processes that communicate with other servers. In this way the separate servers appear as if they are one system to applications and end users.

Oracle Clusterware provides the infrastructure necessary to run Oracle Real Application Clusters (Oracle RAC). Oracle Clusterware also manages resources, such as virtual IP (VIP) addresses, databases, listeners, services, and so on. These resources are generally named ora.resource_name.host_name. Oracle does not support editing these resources except under the explicit direction of Oracle support.

Additionally, Oracle Clusterware can help you manage your applications.

Oracle Clusterware has two stored components, besides the binaries: The voting disk files, which record node membership information, and the Oracle Cluster Registry (OCR), which records cluster configuration information. Voting disks and OCRs must reside on shared storage available to all cluster member nodes.

Oracle Clusterware uses voting disk files to provide fencing and cluster node membership determination. The OCR provides cluster configuration information. You can place the Oracle Clusterware files on either Oracle ASM or on shared common disk storage. If you configure Oracle Clusterware on storage that does not provide file redundancy, then Oracle recommends that you configure multiple locations for OCR and voting disks. The voting disks and OCR are described as follows:

■ Voting Disks

Oracle Clusterware uses voting disk files to determine which nodes are members of a cluster. You can configure voting disks on Oracle ASM, or you can configure voting disks on shared storage.

If you configure voting disks on Oracle ASM, then you do not need to manually configure the voting disks. Depending on the redundancy of your disk group, an appropriate number of voting disks are created.

If you do not configure voting disks on Oracle ASM, then for high availability, Oracle recommends that you have a minimum of three voting disks on physically separate storage. This avoids having a single point of failure. If you configure a single voting disk, then you must use external mirroring to provide redundancy.

You should have at least three voting disks, unless you have a storage device, such as a disk array that provides external redundancy. Oracle recommends that you do not use more than five voting disks. The maximum number of voting disks that is supported is 15.
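The recommendation of at least three voting disks is easier to see with a bit of arithmetic. A node has to be able to access more than half of the configured voting disks, so the number of disk failures a cluster can ride out grows only with every second disk added. The Python sketch below works this out; the function names are hypothetical and the code is just an illustration of the majority rule, not anything Oracle ships.

```python
def required_accessible(voting_disks):
    """Smallest number of voting disks that is more than half of the total."""
    if voting_disks < 1:
        raise ValueError("at least one voting disk is required")
    return voting_disks // 2 + 1


def tolerated_failures(voting_disks):
    """How many voting disks can be lost while a majority stays accessible."""
    return voting_disks - required_accessible(voting_disks)


for count in (1, 2, 3, 4, 5):
    print(count, "voting disk(s):",
          "need", required_accessible(count),
          "tolerate", tolerated_failures(count), "failure(s)")
```

One disk tolerates no failure, three tolerate one, and five tolerate two, while an even count gives no extra protection over the odd count just below it.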

■ Oracle Cluster Registry

Oracle Clusterware uses the Oracle Cluster Registry (OCR) to store and manage information about the components that Oracle Clusterware controls, such as Oracle RAC databases, listeners, virtual IP addresses (VIPs), and services and any applications. The OCR stores configuration information in a series of key-value pairs in a tree structure. To ensure cluster high availability, Oracle recommends that you define multiple OCR locations (multiplex). In addition:

– You can have up to five OCR locations

– Each OCR location must reside on shared storage that is accessible by all of the nodes in the cluster

– You can replace a failed OCR location online if it is not the only OCR location

– You must update the OCR through supported utilities such as Oracle Enterprise Manager, the Server Control Utility (SRVCTL), the OCR configuration utility (OCRCONFIG), or the Database Configuration Assistant (DBCA)
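To picture what "key-value pairs in a tree structure" means, here is a toy model in Python using nested dictionaries and dotted key paths. It is purely conceptual: the key names and values are invented for illustration, it says nothing about the real OCR file format, and the OCR itself must only be changed through the supported utilities listed above.

```python
def insert(tree, dotted_key, value):
    """Store a value under a dotted path, creating branches as needed."""
    *parents, leaf = dotted_key.split(".")
    node = tree
    for part in parents:
        node = node.setdefault(part, {})
    node[leaf] = value


def lookup(tree, dotted_key):
    """Walk the tree along a dotted path and return what is stored there."""
    node = tree
    for part in dotted_key.split("."):
        node = node[part]
    return node


# Hypothetical keys and values, for illustration only.
registry = {}
insert(registry, "EXAMPLE.listener.port", 1521)
insert(registry, "EXAMPLE.listener.name", "LISTENER")
insert(registry, "EXAMPLE.database.name", "rac")

print(lookup(registry, "EXAMPLE.listener.port"))
print(sorted(lookup(registry, "EXAMPLE")))
```

Keys that share a prefix end up under the same branch, which is the property that makes a tree a convenient way to group related configuration values.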

Oracle Clusterware Processes on Linux and UNIX Systems

Oracle Clusterware processes on Linux and UNIX systems include the following:

■ crsd: Performs high availability recovery and management operations such as maintaining the OCR and managing application resources. This grid infrastructure process runs as root and restarts automatically upon failure.

When you install Oracle Clusterware in a single-instance database environment for Oracle ASM and Oracle Restart, ohasd manages application resources and crsd is not used.

■ cssdagent: Starts, stops, and checks the status of the CSS daemon, ocssd. In addition, the cssdagent and cssdmonitor provide the following services to guarantee data integrity:

– Monitors the CSS daemon; if the CSS daemon stops, then it shuts down the node

– Monitors the node scheduling to verify that the node is not hung, and shuts down the node on recovery from a hang.

■ oclskd (Oracle Clusterware Kill Daemon): CSS uses this daemon to stop processes associated with CSS group members for which stop requests have come in from other members on remote nodes.

■ ctssd (Cluster Time Synchronization Service daemon): Synchronizes the time on all of the nodes in a cluster to match the time setting on the master node but not to an external clock.

■ diskmon (Disk Monitor daemon): Monitors and performs I/O fencing for HP Oracle Exadata Storage Server storage. Because Exadata storage can be added to any Oracle RAC node at any time, the diskmon daemon is always started when ocssd starts.

■ evmd (Event manager daemon): Distributes and communicates some cluster events to all of the cluster members so that they are aware of changes in the cluster.

■ evmlogger (Event manager logger): This is started by EVMD at startup. This reads a configuration file to determine what events to subscribe to from EVMD and it runs user defined actions for those events. This facility maintains backward compatibility only.

■ gpnpd (Grid Plug and Play daemon): Manages distribution and maintenance of the Grid Plug and Play profile containing cluster definition data.

■ mdnsd (Multicast Domain Name Service daemon): Manages name resolution and service discovery within attached subnets.

■ ocssd (Cluster Synchronization Service daemon): Manages cluster node membership and runs as the oracle user; failure of this process results in a node restart.

■ ohasd (Oracle High Availability Services daemon): Starts Oracle Clusterware processes and also manages the OLR and acts as the OLR server.

In a cluster, ohasd runs as root. However, in an Oracle Restart environment, where ohasd manages application resources, it runs as the oracle user.

Next blog -> Basic Clusterware Administration Commands

Thanks,

Alfred

Cleanup After Failed Installation Oracle Clusterware 11gR2

In this post I will talk about how to clean up a failed installation of Oracle Clusterware 11gR2 on one particular node. I was installing the new Oracle Clusterware 11.2.0.1 in my home lab by following Tim Hall’s instructions (http://www.oracle-base.com/articles/11g/oracle-db-11gr2-rac-installation-on-oel5-using-virtualbox.php#install_grid_infrastructure), and reached the part where the installer GUI asks us to run the root.sh scripts.

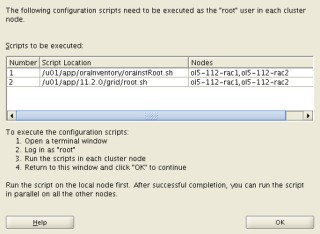

My first thought was to run the root.sh script on both nodes of my RAC (RAC1 & RAC2) at the same time, without reading the explicit instructions: “Run the script on the local node first. After successful completion, you can run the script in parallel on all other nodes.”

The script ran successfully on the RAC1 node but not on the RAC2 node. When the script finished on RAC1, I tried to run it again on RAC2, with the same result: the output said that the script had already been run.

What to do next? Do I have to start over from scratch?

Searching the web, I found a useful article by Guenadi Jilevski (http://gjilevski.com/2010/08/12/how-to-clean-up-after-a-failed-11g-crs-install-what-is-new-in-11g-r2-2/). It shows how to perform a manual cleanup in 11gR1, and also covers the new features and scripts in 11gR2.

Summarized steps:

Deconfigure Oracle Clusterware 11.2.x.x without removing the binaries:

- Log in as the root user on the node where you encountered the error, and change directory to $GRID_HOME/crs/install.

- Run rootcrs.pl with the -deconfig -force flags on the node that has the issue.

- If you are deconfiguring Oracle Clusterware on all the nodes in the cluster, add the -lastnode flag on the last node in order to deconfigure the OCR and voting disks.
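The steps above can be sketched as a small Python helper that only assembles the command line for each node, so it can be reviewed before anything is run as root. The helper name `deconfig_command` and the Grid home used in the example are assumptions for illustration. The script location and the -deconfig, -force and -lastnode flags are the ones from the steps above.

```python
import posixpath


def deconfig_command(grid_home, last_node=False):
    """Assemble the rootcrs.pl deconfigure command as an argument list."""
    script = posixpath.join(grid_home, "crs", "install", "rootcrs.pl")
    command = [script, "-deconfig", "-force"]
    if last_node:
        # Only on the last node: also deconfigure the OCR and voting disks.
        command.append("-lastnode")
    return command


# Assumed Grid home; replace with the value of $GRID_HOME on your system.
grid_home = "/u01/app/11.2.0/grid"

print(" ".join(deconfig_command(grid_home)))
print(" ".join(deconfig_command(grid_home, last_node=True)))
```

The sketch deliberately does not execute anything. Printing the command first makes it harder to pass -lastnode on the wrong node by accident.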